Google is a supercomputer.

Not like HAL from “2001: A Space Odyssey,” KITT from “Knight Rider,” or Roy Batty from “Blade Runner.”

But it’s a supercomputer nonetheless, and in rare moments you can catch glimpses of Google reasoning through a tough decision.

Incidentally, we stumbled on a solid example of this.

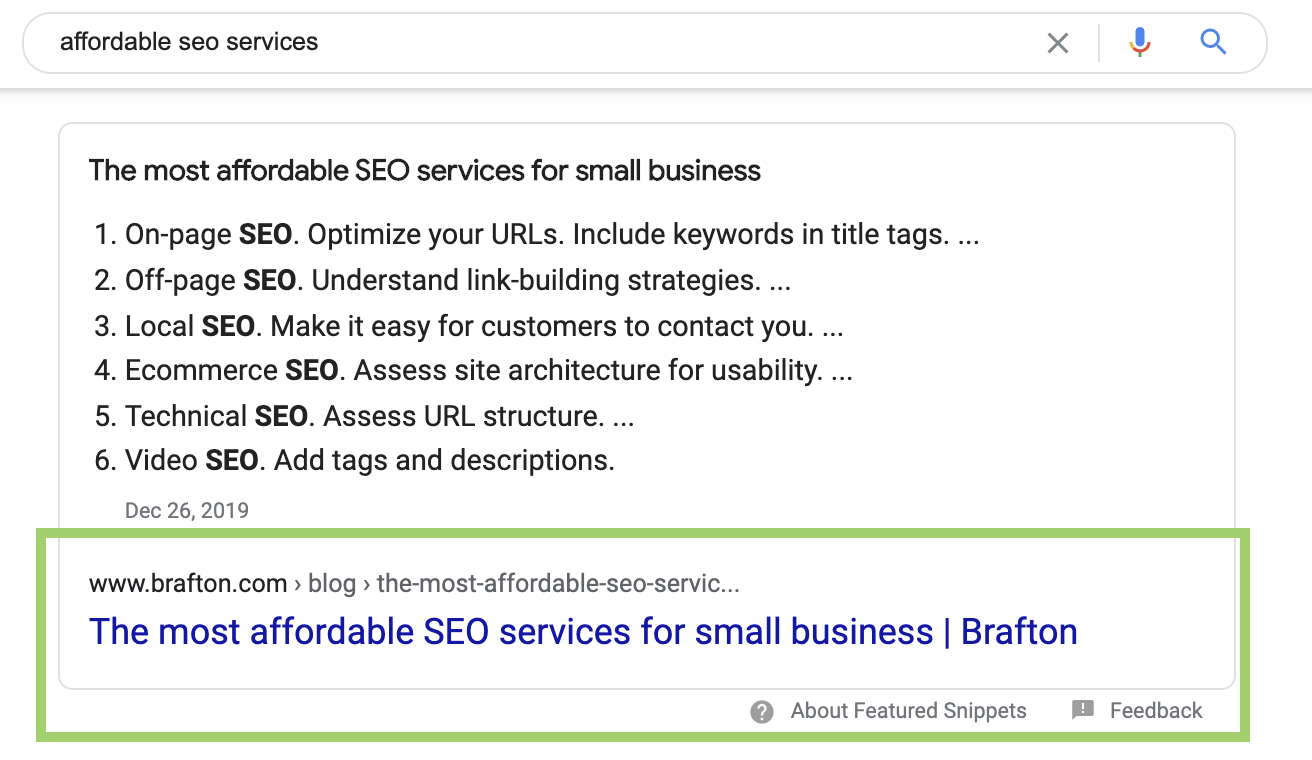

It involves a single keyword (affordable SEO services). We ranked third for this keyword in search results and, by all appearances, we could and should have been ranking first. Upon closer inspection, we thought better of trying to slide into the No. 1 spot. The result of our doing nothing: We earned a featured snippet and saved a few hundred dollars in the process.

It began with a simple content re-optimization exercise

Every month we look at keywords we’re ranking relatively well for, but for which we could rank better.

We use a program called Ryte to find keywords for which we rank between positions 3 and 20. As we comb through the list, we evaluate those keywords on:

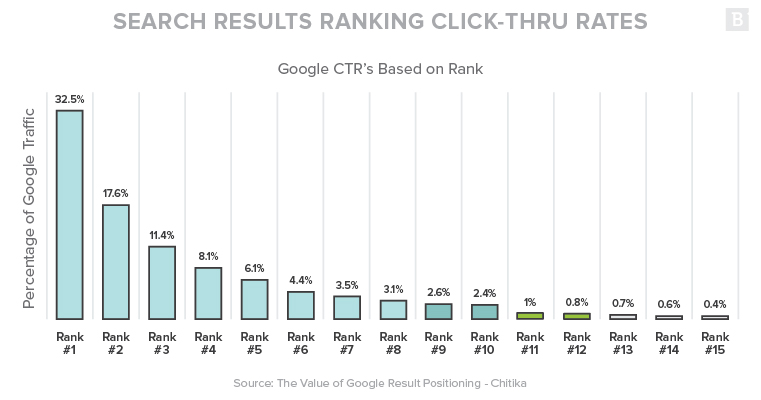

- Search volume and click-through rate: How many clicks can we expect to gain if we get in position 1 for this keyword? We use average estimated click-through rate per position (position 1 usually earns 30% or higher CTR, for instance) to figure this out. 30% of, say, 1,000 searches would mean roughly 300 clicks in position 1.

- Clicks per search (CPS): How many clicks does this search engine result page earn? This is an Ahrefs metric that helps us flag keywords that don’t generate a lot of traffic. Average CTR aside, some keywords just drive fewer clicks than others.
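The click estimate in the first bullet can be sketched in a few lines of Python. This is a minimal illustration rather than part of any tool we use: the function name `expected_clicks` is made up, and the 30% figure is the rough position 1 CTR quoted above.

```python
def expected_clicks(monthly_searches: float, ctr: float) -> float:
    """Estimate monthly clicks as search volume times click-through rate.

    `ctr` is a fraction (0.30 means 30%). The name and signature are
    illustrative assumptions, not taken from any SEO tool.
    """
    if not 0 <= ctr <= 1:
        raise ValueError("ctr must be a fraction between 0 and 1")
    return monthly_searches * ctr


# The example from the list above: 1,000 searches at a 30% position 1 CTR.
print(round(expected_clicks(1_000, 0.30)))  # about 300 clicks
```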

We also check the page ranking for the keyword to make sure it isn’t currently winning for another, higher-value term.

Next, we figure out if our page is within striking distance of the position we’re targeting. We conduct a Search Engine Results Page (SERP) analysis, which is a fancy way of saying, “Google the term and see what’s on Page 1.” We assess two things here:

- Competitiveness: Can our website outrank the competition on Page 1? Page Authority as a primary indicator, and Domain Authority as a secondary indicator, are the best measuring sticks. If the sites on Page 1 all have a much higher PA and DA than ours, we’ll struggle to supplant any of them.

- Search intent: Based on the other listings for the keyword, is a searcher a) looking for information or b) shopping? We have little chance of getting a commercial landing page to rank for a keyword that Google believes has informational intent. We can surmise intent by manually looking at the other listings for the keyword.

Finally, we run the page through MarketMuse. This software estimates whether on-page content is optimized to rank for a keyword. If we score low, we know that we can tweak the existing copy to improve our performance in search results.
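For readers who like checklists, the screening steps above could be written down roughly as follows. This is a hypothetical sketch, not our actual workflow: every field name is invented, the "PA at least equal to the Page 1 median" rule is an assumed stand-in for a judgment call, Domain Authority is left out for brevity, and the example values are made up.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One keyword and the page ranking for it. Field names are hypothetical."""
    position: int              # current ranking position
    our_pa: float              # our Page Authority
    median_rival_pa: float     # median Page Authority of the Page 1 listings
    page_is_commercial: bool   # is our page a landing page rather than a blog post?
    serp_is_commercial: bool   # does Page 1 look commercial on manual review?
    content_score: float       # content score for our page
    target_score: float        # score the content tool recommends


def screening_checks(c: Candidate) -> dict:
    """Return each check separately so a person can make the final call."""
    return {
        "in_position_range": 3 <= c.position <= 20,
        # Assumed rule of thumb: competitive if our PA is at least the median.
        "competitive": c.our_pa >= c.median_rival_pa,
        "intent_matches": c.page_is_commercial == c.serp_is_commercial,
        "room_to_improve": c.content_score < c.target_score,
    }


# Made-up values for illustration only.
example = Candidate(position=8, our_pa=40, median_rival_pa=35,
                    page_is_commercial=False, serp_is_commercial=True,
                    content_score=20, target_score=40)
for name, passed in screening_checks(example).items():
    print(f"{name}: {'pass' if passed else 'review'}")
```

Returning the individual checks, instead of a single yes or no, reflects how the process works in practice: a failed intent check is a prompt to look closer at the SERP, not an automatic rejection.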

So what happened?

We came across a keyword, “affordable SEO services,” that one of our blog posts ranked for. At first, a re-optimization looked like a smart play:

- The keyword had high search volume (1,500+ per month).

- Our page was already ranking in position 3.

- Our Page Authority and Domain Authority rivaled or trumped the listings beating us.

- Per MarketMuse, our on-page content score was a 27 out of a recommended 45, meaning we had room for growth with stronger content.

Granted, there were a few warning signs with this keyword. Our CTR of 0.1% was way below what Brafton.com is used to seeing for a position 3 URL on a high-volume keyword.

We also noticed a low CPS of 59%. This means only a little over half of all searches lead to a click. In theory, this cuts our expected average CTR for this keyword in half.

These reservations aside, this keyword looked enticing. Nabbing position 1 and boosting CTR to 16% (half of the usual 32%) would still earn us 240 clicks per month—much better than the 2 or 3 we were getting.
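Here is that estimate as a short Python sketch. It treats CPS as a simple multiplier on the usual position 1 CTR, which is the rough heuristic used above and not an official Ahrefs formula; the function and variable names are our own. It also shows what the estimate would be with the unrounded 59% figure.

```python
SEARCH_VOLUME = 1_500      # monthly searches for the keyword (from above)
POSITION_1_CTR = 0.32      # usual position 1 CTR (from above)
CPS = 0.59                 # clicks per search reported for this keyword


def adjusted_clicks(volume: float, ctr: float, click_share: float) -> float:
    """Scale the usual CTR by the share of searches that produce a click."""
    return volume * ctr * click_share


rounded = adjusted_clicks(SEARCH_VOLUME, POSITION_1_CTR, 0.5)  # CPS rounded to one half
unrounded = adjusted_clicks(SEARCH_VOLUME, POSITION_1_CTR, CPS)

print(f"CPS rounded to 50%: {rounded:.0f} clicks per month")
print(f"CPS at 59%:         {unrounded:.0f} clicks per month")
```

With the rounded discount this reproduces the 240 clicks quoted above; with the full 59% the figure comes out somewhat higher, at around 283.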

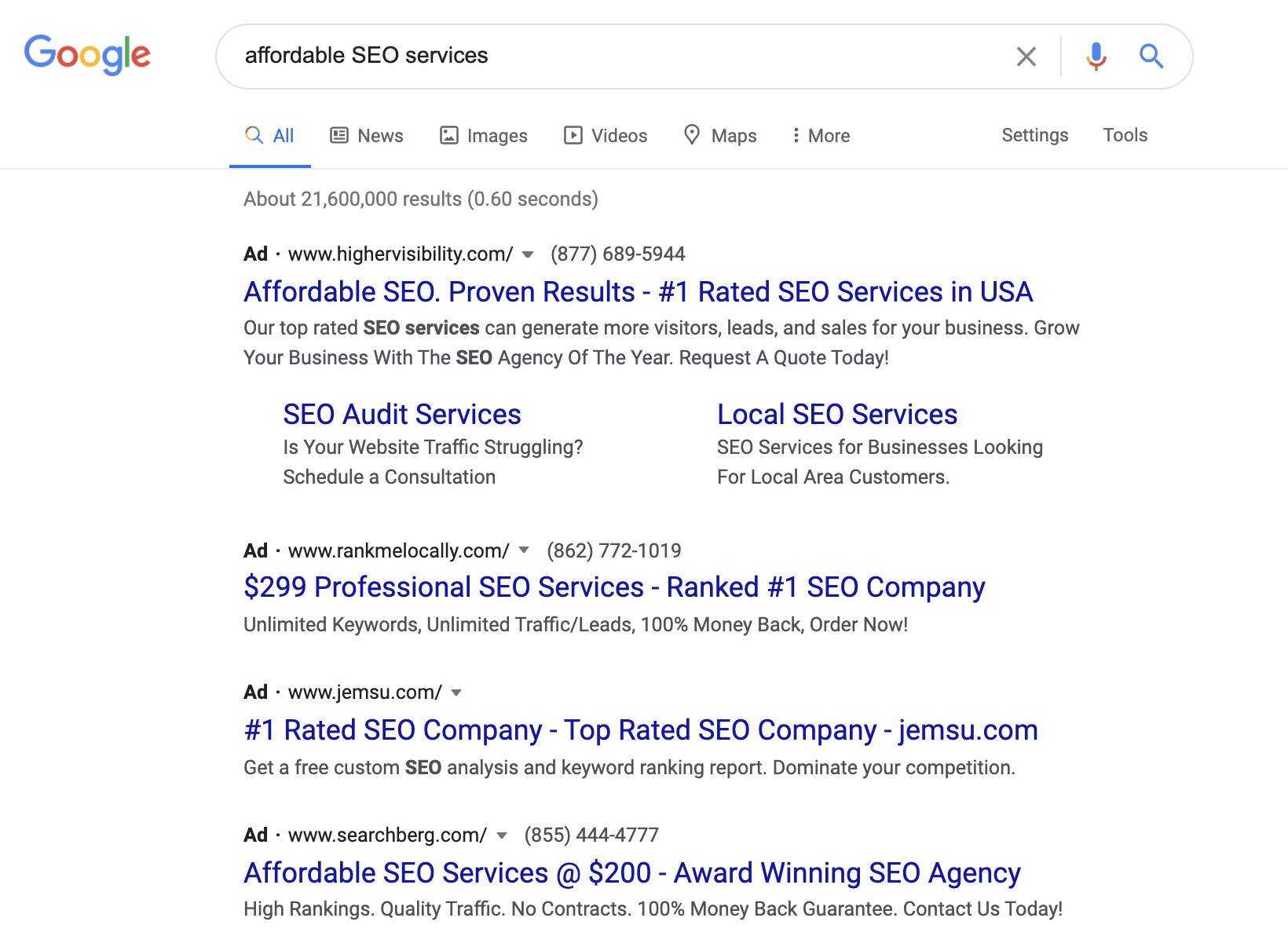

Which brings us to search intent, and to where things got really interesting. The first thing we noticed when searching this keyword is that there were a lot of paid results.

This indicates commercial or buyer’s intent. Scrolling down the page tells a more muddled story.

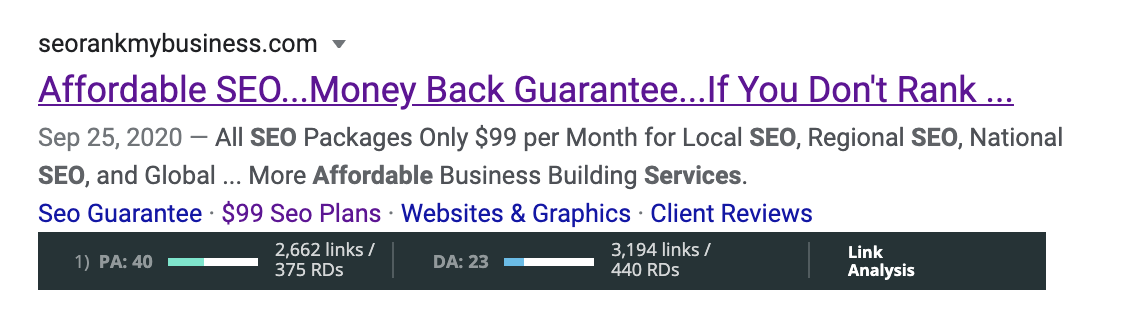

You would expect a site with strong DA to win for a keyword with high search volume. Instead, a site with a DA of 23 (compared to our 62) and 440 referring domains (we have 9,000+) held the No. 1 spot. It’s worth considering that their ranking page was the homepage, which carries more weight.

Still, this site wasn’t the only homepage on the SERP, and it had the lowest DA on all of Page 1 and the fewest referring domains.

To be fair, this site has the third-highest PA on Page 1. More importantly, PA and DA don’t guarantee where you rank. We’ve punched above our weight before with exceptional on-page content. Could this just be an example of another site that’s doing the exact same thing?

According to MarketMuse’s assessment of the page’s content, the answer was a hard “NO.” The page scored 11, against an average of 29 and a recommended 45. This means the copy isn’t optimized for the keyword “affordable SEO services.”

It doesn’t add up.

I wondered: Our page is a blog post. Could that be working against us?

Maybe, but that didn’t seem like a clear enough explanation. There were just as many blog posts on this page as there were landing pages. One blog post even suggested AVOIDING affordable SEO services.

And that’s when I stumbled upon a big clue as to what might be happening here: The blog posts on Page 1 came almost entirely from sites with higher DAs than the landing pages.

What does that mean?

We can only hazard guesses about why this particular SERP is such a mess.

But this looks like an extreme case of fractured intent.

Google can’t tell whether “affordable SEO services” signals commercial intent, informational intent, negative sentiment, positive sentiment or comparison shopping. In other words, the world’s largest search engine is confused.

This confusion stems from mixed signals. There’s plenty to indicate commercial intent—paid search results and the words “affordable” and “services.” At the same time, most of the commercial landing pages ranking for this term have very low DA, and the PAs are unimpressive.

To compensate, we suspect Google is adding a few pages from stronger domains to Page 1—pages that have clear informational, or even negative, intent. Those pages, including ours, likely get fewer clicks because they’re not matching commercial search intent.

Just glancing at Page 2, we can see the few blog posts listed have a stronger PA and DA than most of the commercial landing pages present in the SERP.

So what did we do?

Nothing.

Even if we re-optimized this existing blog post to have the best on-page content for “affordable SEO services,” we suspected Google would still question whether it satisfies searcher intent.

We’re happy with this choice, because Google’s din of mixed signals somehow led to us capturing the featured snippet.

Think about that for a second: Featured snippets are purely informational. Their purpose is to provide an answer, not a product. Despite all the paid results on the page, and a commercial landing page in the top organic spot, Google somehow decided that a featured snippet made sense on this SERP.

This hammers home the point: Google can’t figure out what type of content people want to see when they search for “affordable SEO services.”

The great irony here is that we nearly spent a few hundred dollars to re-optimize this page and, had we done it, we may have prevented ourselves from ever getting a featured snippet.

(Disclaimer: By the time you read this, there’s a possibility that we’ll no longer even have the featured snippet given how mixed the intent of this keyword appears to be. We also noticed variation by location, meaning depending on where you are, you may not see us there.)

But wait! A twist

Even with the featured snippet, we’re still seeing a low CTR of 0.2%.

You could make the case that we’re answering the searcher’s question so there’s no need to click.

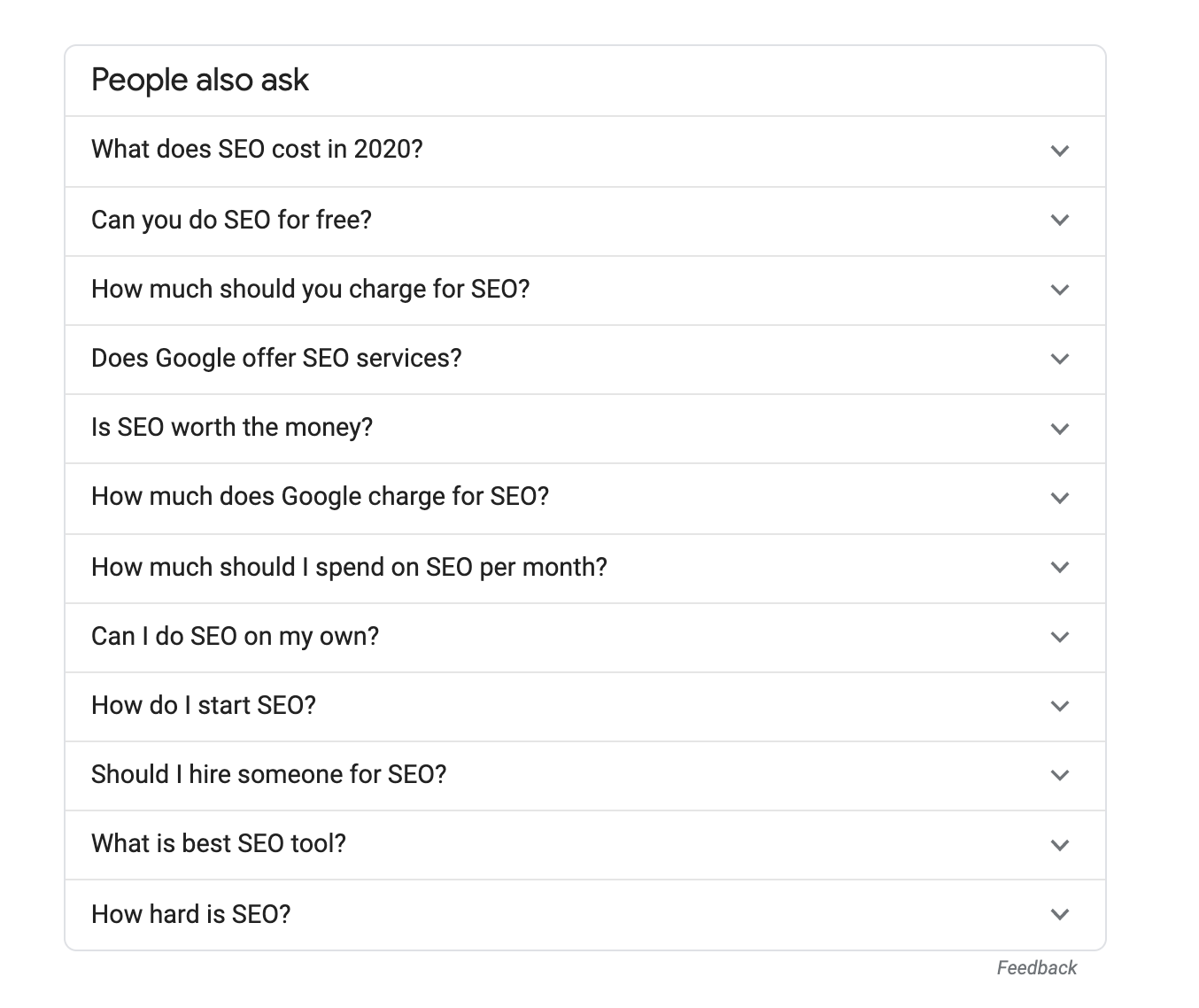

It’s also possible that the “People Also Ask” in-SERP feature is preventing some searchers from clicking into any results.

But that’s unlikely considering how much farther down the page it appears than our featured snippet. Keep in mind, a featured snippet appears at the top of a page and gets an average estimated 8.6% CTR. At 0.2%, we are more than an order of magnitude beneath that.

We know based on CPS that roughly 59% of searches lead to a click. Even if that cut the 8.6% average roughly in half, as we assumed earlier, we would have a 4.3% CTR. For the sake of argument, let’s say the People Also Ask box and ads were taking half of that traffic. Even then, we should see a 2.1% CTR.
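The same back-of-the-envelope reasoning can be written out as a short Python sketch. It assumes each factor simply halves the CTR (the 59% CPS rounded to one half, then a second halving for People Also Ask and ads); the helper name `apply_discounts` is hypothetical.

```python
def apply_discounts(base_ctr: float, *discounts: float) -> float:
    """Multiply a baseline CTR by each discount factor in turn."""
    ctr = base_ctr
    for factor in discounts:
        ctr *= factor
    return ctr


FEATURED_SNIPPET_CTR = 0.086   # average estimated CTR for a featured snippet
OBSERVED_CTR = 0.002           # the 0.2% we actually see

# First halving: low CPS. Second halving: People Also Ask and ads.
expected = apply_discounts(FEATURED_SNIPPET_CTR, 0.5, 0.5)
shortfall = expected / OBSERVED_CTR

print(f"Expected CTR after both discounts: {expected:.2%}")
print(f"Expected vs. observed: about {shortfall:.0f}x")
```

Even under these generous assumptions, the expected CTR is still more than ten times what we observe.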

By all appearances, we’ve most likely won a featured snippet as a result of Google’s confusion. The finishing touch on this layer cake of ironies is that it hasn’t materially helped us one way or the other.

A term like “affordable SEO services,” which you would think is right in our ballpark and squarely within our strike zone, ended up being all but valueless to us.

The final takeaway

Google really is a complex program that can churn out SERPs with tremendous accuracy.

Still, it’s just a program, one that takes its cues from variables that can very much be at odds. As impenetrable as its reasoning may seem at times, there is always a story in the SERPs.

You just need to know how to look for it. At the very least, you might save a few hundred bucks.